03 · What I built

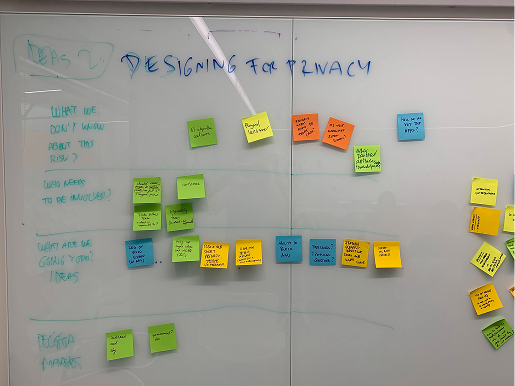

A workshop that converted risk into a named mitigation and an owner

The centrepiece was a hybrid workshop designed and facilitated on Miro, built around three named stages: lightning talks framing Cherry (Sky Live's internal codename) from multiple perspectives; a structured risk-mapping block using Ethical Explorer and Layers of Effect tailored to Sky Live; and a "What now?" stage that converted each named risk into an actionable insight, a design mitigation, and an owner.

Stage 1, Lightning talks

Short framing talks giving every attendee the same picture of Cherry: what it is, what data it touches, what the customer perspective looks like, what the known constraints are.

Stage 2, Ethical Explorer + Layers of Effect

Teams ran each area of concern through Ethical Explorer to name the risk, then through Layers of Effect to forecast first-, second-, and third-order consequences for customers, the business, and society.

Stage 3, What now? Actionable insights

Every named risk came out with a committed action, a named owner, and the people who still needed to be involved. No risk left the workshop unassigned.

The four questions each risk had to answer

What don't we know?

The honest gap. What the team could not yet answer about the risk, the dataset, the behaviour, or the customer.

What are we going to do about it?

The committed action. A concrete design, product, or engineering step, not a statement of intent.

Who are the decision-makers?

The people who could approve or block the action, named explicitly so the team knew who to go to.

Who else needs to be involved?

The cross-functional partners (legal, research, engineering, propositions) needed to make the mitigation real.

Five areas of concern, two I owned end to end

Privacy, in lieu of a physical shield

A camera-first device in the home introduced real risks: surveillance, stalking, hacking. These needed explicit design mitigations, not just policy statements. I owned this thread from risk framing through to the "Your privacy protected" onboarding and hardware mute-button messaging.

Inclusivity

Face detection reliant on ML is fallible and risks excluding users. Children's rights, positive self-image, and non-violent values needed active consideration. I owned this through to the no-beautifying-filters statement in Video Booth.

Trust, Bad actors, AI harm

The remaining threads, held across the DEN: the consent gap between what the service did and what customers understood it to do; Watch Together risks around inappropriate broadcasts and unsupervised children; and model bias from insufficiently diverse training data.

Artefacts beyond the workshop

Digital Ethics Framework

Built on Ethical Explorer and Layers of Effect as a Miro-based template for teams to adopt in their own projects. Served as a shared tool to spark discussion, align stakeholders, and surface the ethical impact of design decisions before they shipped. A workshop-outcomes deck captured the risks and initiatives for ongoing reference.

AI and Digital Ethics talks

A series of talks across Sky teams on how designers should approach AI responsibly. Covered practical AI use cases, machine learning concepts, model cards, and the UX challenges of AI-driven products, all pre-GenAI.